What you need to know about resiliency

How you can really improve the resiliency of your software and what you need to do

Developing software is easy. Just implement a few features, add a few tests if you feel like it, and that’s it. Or is it? Well…it depends.

Let me tell you a story:

There once was a team that built software like many other teams. They implemented features, they tested thoroughly, they even had regular load tests, they added all kinds of alerts, and they monitored their software closely. Their software ran on Kubernetes and was thus deployed as a container on a pretty large cluster. Their deployment strategy was simple: Just a rollover of the deployment. Release a new version, use Helm to trigger the rollover, and let all the magic within Kubernetes do its thing.

The software did not have many dependencies. It just needed a Redis to store some data inside, and that was it. Get a request, make a Redis lookup, do some more processing, and then return a response. Not much that could actually fail.

Everything ran fine for one and a half years, until one Saturday evening, they got a call from a Vice President. Everything was down because their service didn’t respond anymore. Something was odd. All requests took thirty or more seconds. All clients only showed the loading placeholders of doom. Customers were unhappy. Management was unhappy. Nothing worked.

What had happened?

As it turns out, a massive spike in traffic had overwhelmed the network interface of the ElastiCache cluster (in the end, just a Redis with an interface in front of it) that the team used to store their data. Additionally, the cluster held so much data that the machine began to swap memory. This meant that all Redis commands took forever to finish. And this, in turn, meant that everything took forever.

But how could this happen?

It turns out that the Redis connection was just a plain Redis client, and the logic worked as follows:

A request comes in

Take a connection from the pool

Send the Redis commands

Get the responses

Return the connection to the pool

Process the responses

Send a response to the client

Just plain logic. No fallbacks. No safety measures.

Spot the issue? Yes. A feature that had worked for one and a half years didn’t work in one particular situation. A feature that had been load tested thoroughly and never broke finally gave in and broke. It broke in a situation with so much entropy that no one could ever have come up with a suitable load test to reproduce such a scenario reliably. Nevertheless, it happened.

A very important lesson about resiliency

The short story above is just an example, but scenarios like this one happen probably every day around the world.

They teach us one crucial thing, however:

We actually don’t build software for 99.99% of cases. We build it exactly for the 0.01% of cases where something really breaks. Or better: We should.

But, resiliency is difficult. Our job as software engineers is not done when we think it’s done. It’s done when we’ve put in our best efforts to protect our software against nearly unimaginable failure scenarios. And we can be sure of one thing: We will never get it right. This is why I said “best efforts,” because failure is inevitable.

Even with a lot of experience, you will still overlook a possible edge case from time to time. What really counts in these circumstances are the measures you have taken to at least soften the blow as much as possible. And even these take a lot of experience and imagination to come up with.

Next to that, resiliency ≠ resiliency. It’s a situational thing. Some applications need more resiliency than others. A static website that you deploy to Cloudflare Pages, Netlify, or Vercel needs way less resiliency than a huge streaming portal with many moving parts and hundreds of micro-services, deployed to Kubernetes on AWS. The static website already profits from what the serverless hosters offer, while the streaming portal is so complex that it needs a lot of custom-tailored solutions to increase its resiliency.

Lastly, some measures to increase your resiliency cost way more than others. At some point, the decision about whether to increase your resiliency becomes a matter of your available budget. And more often than not, you will probably have to decide to not make your application more resilient because it’s not economical anymore.

Actually, there is a pretty simple rule about resiliency and uptime, which goes as follows:

Achieving 99% uptime is easy. 99.9% uptime is already exponentially harder and more expensive. Every additional 9 you want to add to the decimal places of your uptime will cost you exponentially more than the previous one.
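To get a feeling for what each additional 9 means, it helps to translate an uptime target into the downtime it allows per year. The following is a minimal sketch of that arithmetic. The function name is my own, and it only shows the shrinking downtime budget, not what it costs to get there.

```python
# Allowed downtime per year for a given uptime target.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # ignores leap years for simplicity


def allowed_downtime_seconds(uptime_percent: float) -> float:
    """Return how many seconds per year a system may be down."""
    return SECONDS_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_seconds(target) / 60
    print(f"{target}% uptime allows about {minutes:.1f} minutes of downtime per year")
```

Every additional 9 divides the downtime you are allowed by ten: roughly 3.65 days at 99%, less than 9 hours at 99.9%, and less than an hour at 99.99%.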

And finally, you will never be able to make any system so resilient that it will forever stay at a 100% uptime. It’s impossible. It will never happen. There is always a single point of failure that you can’t make more resilient. If you try to, you create another single point of failure. If you try the same procedure again, you end up where you started. You’ve basically reached the end of it. At some point, everything else is out of your control.

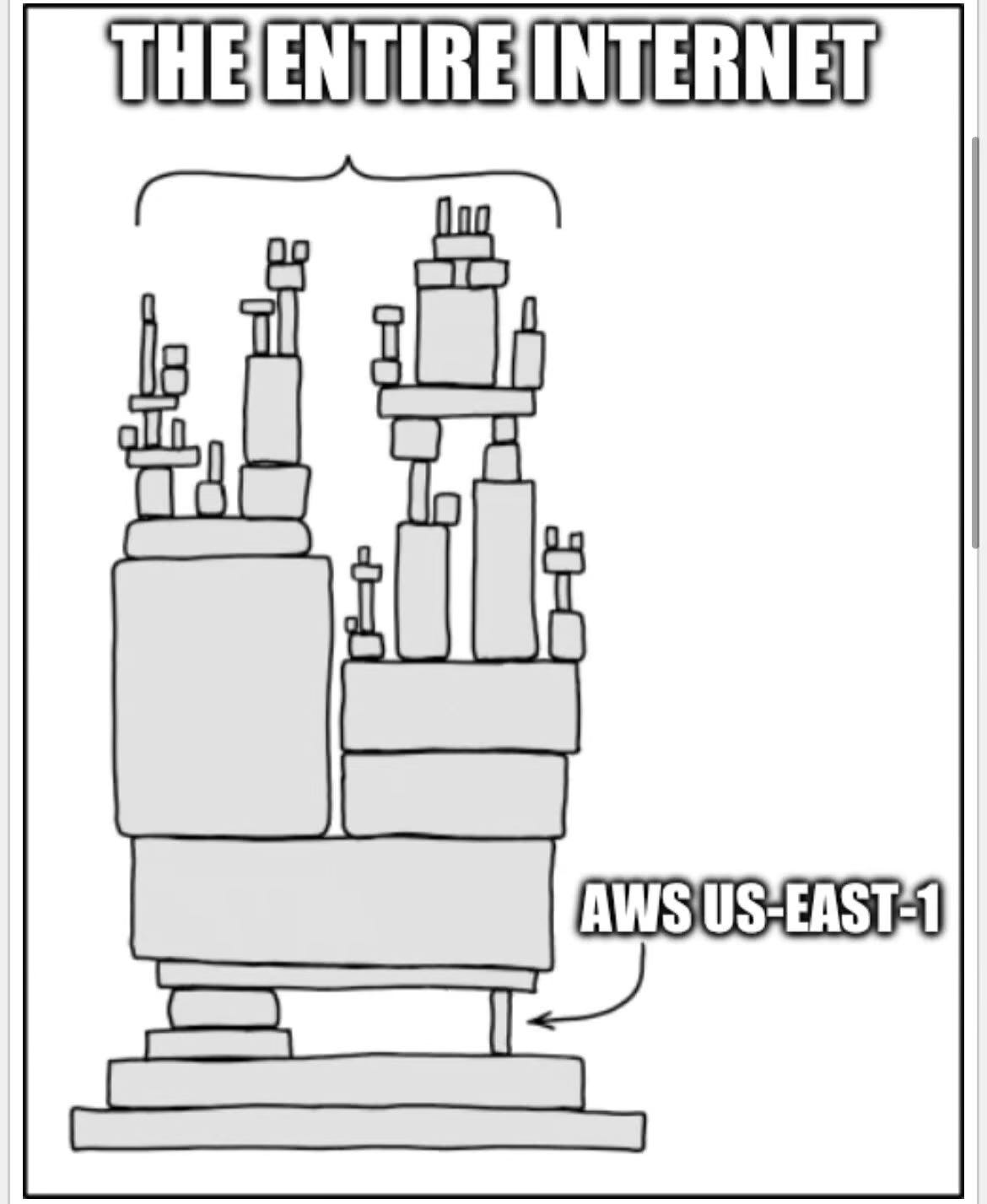

There exist a few memes for a reason. One example is one about AWS’ us-east-1. Even a company like Amazon can’t eliminate all its single points of failure. If us-east-1 is down, many other regions and services go down.

This proves one point:

Resiliency is difficult, and sometimes, you just can’t get it right.

A few examples of what you can do to improve resiliency

Although you can never get to 100% uptime and completely resilient systems, it’s still worth investing in the parts of your system where it makes sense. Sometimes, it doesn’t take much to improve the resiliency of a single service so much that any further investments make no more sense. The rest is just the risk you need to live with.

But let’s take a look at a few examples of how you can improve the resiliency of your software, even on a smaller scale.

Circuit Breaking

Circuit Breakers are one of the most important resiliency patterns out there, and yet they are not used often enough. They would also have been a pretty great way to prevent the issue of our small example from the beginning.

In electronics, a circuit breaker is an intended breaking point. In case of an overloaded electric circuit, a ground fault, or a short circuit, the circuit breaker trips intentionally and prevents further damage to devices and the system itself. The circuit breaker itself is designed in a way that it can break without becoming broken. It can trip over and over again (at some point you have to replace it, but that usually takes forever).

Circuit Breakers in Software Engineering

In software engineering, a circuit breaker works similarly. A method or remote call is protected by a circuit breaker that constantly measures the behavior of what it protects. If one of the metrics the circuit breaker observes exceeds a certain threshold, the circuit breaker trips temporarily to prevent further damage to the system. After a while, the circuit breaker cautiously lets a percentage of all calls through again while observing its metrics. If the metrics stay below the threshold, the circuit breaker restores the whole flow. Otherwise, it interrupts the flow again.

Conceptually, a circuit breaker in software engineering has three states:

Closed

Half-Open

Open

Closed State

In this state, everything works normally. “Closed” is meant in the same sense as for a circuit breaker in electronics: As long as the circuit is closed, electricity (or requests, in software engineering) flows.

Half-Open State

In this state, the circuit breaker has already tripped. It was previously open and now closes the circuit only for a certain percentage of requests to see whether everything has gone back to normal. If so, it goes into the closed state again to allow calls to flow through. If not, it opens and prevents all requests again.

Open State

In this state, the circuit breaker has tripped. One of the metrics the circuit breaker observes has gone over a certain threshold and all further requests are rejected temporarily.

Additional traits

Unlike a circuit breaker in electronics, the one we use in software engineering has a few additional traits. Instead of only the electric current, it can observe multiple metrics. Usually, a circuit breaker has at least support for measuring and handling timeouts, exceptions, and other errors. Additionally, most circuit breakers allow for a fallback. This fallback is used whenever the circuit breaker trips.
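The three states and the fallback can be sketched in a few lines. This is a simplified illustration, not a production implementation: the class and parameter names are my own, it only counts consecutive exceptions (so a timeout has to surface as an exception from the wrapped call), it is not thread-safe, and in the half-open state it lets a single trial call through instead of a percentage of requests.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Minimal circuit breaker that trips after consecutive failures."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 10.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout  # seconds to stay open
        self.clock = clock                  # injectable to make testing easy
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def call(self, func: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.state == "open":
            if self.clock() - self.opened_at < self.reset_timeout:
                return fallback()           # fail fast, protected call is skipped
            self.state = "half-open"        # waited long enough, allow a trial call
        try:
            result = func()
        except Exception:
            self._on_failure()
            return fallback()
        self._on_success()
        return result

    def _on_success(self) -> None:
        self.failures = 0
        self.state = "closed"

    def _on_failure(self) -> None:
        self.failures += 1
        # A failed trial call or too many consecutive failures trips the breaker.
        if self.state == "half-open" or self.failures >= self.failure_threshold:
            self.state = "open"
            self.opened_at = self.clock()
```

A caller would wrap the remote call and pass a fallback, for example `breaker.call(lambda: fetch_from_redis(key), lambda: None)`, where `fetch_from_redis` stands for whatever function performs your remote call.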

What a circuit breaker can be used for

Circuit breakers are usually used to protect remote calls. In distributed systems, other services and components are usually way more likely to break than internal components within a service.

A circuit breaker can protect calls to upstream services, but also to databases. Sometimes, it also makes sense to protect calls that are otherwise internal but make use of system resources, especially in containerized systems. If a service uses an SQLite database that is mounted through a volume inside a Kubernetes cluster, it can indeed also be worthy of being protected by a circuit breaker.

Usually, a circuit breaker wraps a function call, which itself performs a remote call (or accesses the file system, like an SQLite database).

Common misconceptions about circuit breakers

A circuit breaker doesn’t magically make everything better. It also doesn’t prevent issues from occurring. In fact, it actually makes things fail faster and it also creates another category of errors. But in the end, these are intended measures to soften the blows of failure.

Many engineers are afraid of intentionally letting things fail. They work under the assumption that sending a response somehow (even if it means blocking resources for tens of seconds) is better than showing users an error. But this assumption is wrong.

The reality is that protecting the system for at least a certain percentage of users is better than letting it fail for everyone. If a circuit breaker in your service gives another service time to breathe and recover, that’s better than continuing to put that other service under pressure until it completely fails.

More often than not, you can implement a reasonable fallback. Sometimes, serving static fallback content can save the day. It might not be the most accurate content and may not be personalized, but once again: Something is better than nothing. And even if you cannot reliably serve any fallback content, you can still ensure that your application only fails for a certain percentage of users.

Circuit Breakers as Infrastructure

If you work with Kubernetes and deploy containers to clusters, you have even more choices. A good Service Mesh can provide circuit breaking capabilities out-of-the-box, for example.

Service meshes are designed and implemented to deal with the chaotic nature of distributed systems. They control how data flows and help with exactly that. Most of them provide sidecar proxies that additionally come with built-in circuit breakers. And usually, you can fine-tune these with resource definitions and tailor them to each individual upstream service. The sidecar proxy intercepts your outgoing traffic, proxies it, and handles things like circuit breaking for you, while returning the response as if nothing had ever happened.

Deploying a service mesh and actively putting your services inside that mesh often frees you from having to manually implement circuit breaking for upstream APIs. It may not free you from implementing them for your database calls, though. But still, if you configure them correctly, you don’t have to write code yourself, which alone is already worth a lot.

Istio, for example, comes with an extensively configurable circuit breaker. You can add a so-called DestinationRule for each upstream, which additionally frees you from having to rebuild your software only because you want to reconfigure one of your circuit breakers. Changing the configuration only involves updating a resource definition within the cluster, which is usually way faster than rebuilding your whole software and deploying a new container.

Dealing with Backpressure

Just try to imagine that someone pushes you a few times and at some point you fall. But when you try to stand up again, that someone continues to push you. Standing up becomes nearly impossible at this point. This is too much backpressure.

Every service or component in a system has a certain load it can take before it breaks. Even if scaled horizontally, there are situations in which single instances of a component cannot reliably take any more. In this situation, they are just overwhelmed by the requests they get and give in at some point. When this happens, recovery is often difficult, especially when the requests don’t stop.

Interestingly, many software engineers still ignore handling backpressure and instead focus on other things. They do nothing to protect their services and components. If their services get overwhelmed, they have a hard time trying to recover the system.

Handling Backpressure

Although circuit breakers exist, you should never rely on everyone playing fair. Maybe some of your colleagues didn’t have the time (or sometimes the will) to implement a circuit breaker with reasonable timeouts and a back-off strategy for calls to your component. Sometimes, clients are not under your control. On the internet, everyone can call a public API, and even if it’s authenticated, authentication doesn’t stop requests from arriving in the first place.

It’s your job to find out at which point failure is imminent, for example by load testing thoroughly. You need to find the important metrics your service depends on. Perhaps a component is CPU- or memory-bound, or it actually depends on the number of file descriptors available. These are metrics you can actively observe, even within a component itself.

You need to discover thresholds at which a component needs to say: “No more.” Depending on where you deploy your software, you might even need to find multiple. On Kubernetes, for example, you can gather one set of thresholds at which Kubernetes should direct no more traffic toward a pod (that’s the readiness probe), and one set of thresholds that puts a service into emergency mode, stopping most of the work and actively rejecting requests. While the first set’s values should be below the values of the latter, both should still be a little away from the point of complete failure.

Handling Backpressure in HTTP Services

HTTP has a strategy to tell clients to “back off”: HTTP status codes and HTTP headers. Although clients are not required to implement it, it’s still a good start because at least a few HTTP client libraries respect it.

There are two status codes that you can use for this purpose:

503 Service Unavailable

This indicates that the service is not ready to accept any traffic at the moment (which means that no one can currently be served)

429 Too Many Requests

This usually indicates that a specific user has sent too many requests and is probably being rate limited (which means that other users may still be served)

Additionally, there is the Retry-After header that you can use to give your service some room to breathe (at least from clients that respect it).

If an instance of your service detects that it’s close to collapsing, it should actively start to reject requests. In this case, you can return either of the two status codes mentioned above. In most cases, if you don’t selectively rate limit, a 503 is the better choice, though (at least semantically).

Additionally, you can set the Retry-After header to one of two possible values:

A date

An integer value in seconds

If you set a date, some clients will respect this value and only try to issue a new request after the date specified. In case of an integer value, clients are asked to retry the request after the number of seconds specified within the header has passed. If your service only receives requests from components inside a closed system, you can require everyone else to honor this status code and header combination (or even make it a standard for everyone in your company).
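On the client side, honoring the header mostly means turning its value into a delay. Here is a small sketch of how a client could do that using only the Python standard library. The function name is my own, and unparsable values are simply reported as `None` so that the caller can fall back to its own back-off strategy.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_after_seconds(header_value: str, now: Optional[datetime] = None) -> Optional[float]:
    """Translate a Retry-After header value into a delay in seconds.

    Returns None if the value is neither an integer nor an HTTP date.
    """
    value = header_value.strip()
    if value.isascii() and value.isdigit():
        return float(value)                  # form 1: integer number of seconds
    try:
        retry_at = parsedate_to_datetime(value)  # form 2: HTTP date
    except (TypeError, ValueError):
        return None
    if retry_at.tzinfo is None:
        retry_at = retry_at.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    # A date in the past means the client may retry immediately.
    return max(0.0, (retry_at - now).total_seconds())
```

A client would then wait for the returned number of seconds before it retries, ideally with an upper bound of its own so that a misbehaving server can’t park it forever.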

The important task for you, however, is to implement a backpressure strategy at all and as early as possible. Rejecting a request if your service can’t take any more should happen before it spends significant resources trying to process a request. This usually means implementing this kind of logic in a middleware layer.

If you place these safety measures as early as possible in the call chain of any service, it becomes less important whether clients honor an HTTP status code and a Retry-After header. Clients respecting it are just a nice added bonus on top. Rejecting most of the work as early as possible still gives your service more room to slowly recover. If your service is deployed on Kubernetes, it can additionally signal that it’s not ready to receive traffic by returning a non-successful status code at the endpoint for its readiness probe. Kubernetes will then shift traffic to other pods until the readiness probe signals a recovery by returning successful status codes again.
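As an illustration of rejecting work early, here is a sketch of a WSGI middleware that sheds load once too many requests are in flight. Everything about it is an assumption for the sake of the example: the class name, the use of in-flight requests as the only metric, and the two thresholds. Your own service might watch CPU, memory, or file descriptors instead.

```python
import threading


class LoadShedder:
    """WSGI middleware that rejects requests once too many are in flight."""

    def __init__(self, app, ready_below: int = 80, max_in_flight: int = 100,
                 retry_after: int = 5) -> None:
        self.app = app
        self.ready_below = ready_below      # lower threshold for the readiness probe
        self.max_in_flight = max_in_flight  # upper threshold for emergency mode
        self.retry_after = retry_after      # seconds, sent as Retry-After
        self.in_flight = 0
        self.lock = threading.Lock()

    def is_ready(self) -> bool:
        """A readiness endpoint could return a non-2xx status if this is False."""
        with self.lock:
            return self.in_flight < self.ready_below

    def __call__(self, environ, start_response):
        with self.lock:
            overloaded = self.in_flight >= self.max_in_flight
            if not overloaded:
                self.in_flight += 1
        if overloaded:
            # Reject before any business logic has consumed resources.
            start_response("503 Service Unavailable", [
                ("Retry-After", str(self.retry_after)),
                ("Content-Type", "text/plain"),
            ])
            return [b"overloaded, please retry later\n"]
        try:
            # Simplification: a lazily streamed body is not counted as in flight.
            return self.app(environ, start_response)
        finally:
            with self.lock:
                self.in_flight -= 1
```

Wrapping an existing WSGI application is then a one-liner like `app = LoadShedder(app)`. The readiness threshold sits below the rejection threshold, so Kubernetes can stop sending traffic before the instance has to reject anything.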

Handling Backpressure in Other Components

For all other components that are no HTTP services or similar, there are usually other strategies. Sometimes, they are directly baked into the component itself, sometimes S/P/IaaS providers provide them for you, and sometimes, you need to handle it yourself from the outside.

For these components, it’s important to do some research. You need to find out whether the components you want to use have a backpressure strategy in place or whether there are additional components (like proxies) that you can deploy to do it for you.

If there is nothing available, you probably need to implement a strategy in components that are under your control. For a database, that means actively circuit breaking all requests and allowing the database to recover if it’s overwhelmed. Often, this especially includes configuring timeouts because long-running queries can be a good first indicator of an overwhelmed database.

In the end, you need to get creative when dealing with such components because there are so many different kinds of them. It can also take quite some time to come up with a suitable strategy. Nevertheless, you should invest time and resources into it because when things really get bad, you’ll profit from that investment massively.

Caching

Caching usually has different applications, but it’s also a good way to increase the resiliency of software components. Caches (whether in-memory or remote) are usually faster than calling the sources of their data. Calling a Redis to get some data is, for example, often faster than performing the SQL query that provides the source of that Redis’ cached data. Having an in-memory cache in front of your Redis is, in turn, faster than issuing a call to your Redis at all. Having an application cache in front of all your logic can save your service from performing too much work overall.

Which types of caching you should implement does, of course, depend on your specific use case. Sometimes, caching your database data is enough, sometimes, an in-memory cache is enough, and sometimes you need it all. Nevertheless, all strategies have their own use cases.

Caching Upstream Responses

Caching upstream responses is pretty common. In fact, the HTTP spec even encourages it by providing a Cache-Control header. Any upstream service can send such a header with its response and tell you how you are allowed to cache the response. Browsers also honor the header and cache responses accordingly.

The spec is quite extensive and the header itself has many fields, but all of them have a right to exist. There are also quite a few HTTP clients that already have their own caching mechanisms that work exactly by processing this header. But even if your client has none, you can at least partially implement a caching mechanism yourself that processes the Cache-Control header.

Caching directly impacts your system’s resiliency by decreasing the number of requests your upstreams have to handle. If they correctly set their Cache-Control header, they allow you to fire fewer requests against their API because you can reuse cached responses for as long as they are valid.

Another mechanism that even improves your whole system’s resiliency is the stale-if-error field of the Cache-Control header. By setting this field to a positive integer (a number of seconds), an upstream service allows you to reuse a cached response, even if it’s stale (which means it’s already older than its max-age field allowed), in case a call to it returns a 5XX HTTP status code (in other words, an error). This means that even if an upstream service you need to function properly is down or experiences other issues, you can still continue to serve at least some requests.
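The decision whether a cached response may still be used can be sketched like this. It is a deliberately partial illustration: the function names are my own, only max-age and stale-if-error are evaluated, other fields such as no-store or must-revalidate are ignored, and quoted values containing commas are not handled.

```python
from typing import Dict, Optional


def parse_cache_control(header: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control header into a dict of lowercase field names."""
    fields: Dict[str, Optional[str]] = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, value = part.partition("=")
        fields[name.strip().lower()] = value.strip().strip('"') or None
    return fields


def _seconds(fields: Dict[str, Optional[str]], name: str) -> int:
    """Read a field as whole seconds, treating missing or odd values as 0."""
    value = fields.get(name)
    return int(value) if value is not None and value.isdigit() else 0


def may_use_cached(header: str, age_seconds: float, upstream_failed: bool) -> bool:
    """Decide whether a cached response of the given age may be served."""
    fields = parse_cache_control(header)
    max_age = _seconds(fields, "max-age")
    if age_seconds < max_age:
        return True                  # still fresh, no upstream call needed
    if not upstream_failed:
        return False                 # stale and the upstream is healthy: refetch
    # Stale, and the upstream returned an error: check the grace period.
    return age_seconds < max_age + _seconds(fields, "stale-if-error")
```

With `max-age=60, stale-if-error=300`, a two-minute-old response is not served as long as the upstream works, but it is served if the upstream answers with a 5XX.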

Caching Database Results

Depending on which database you use and how you store your data, you might deal with more or less scalable components. But even a NoSQL database can become pretty slow if you issue many complex queries (given that you can’t optimize them further).

Caching database results is usually a little more complex than caching upstream responses. Databases don’t send a Cache-Control header that tells you how long you can safely cache their responses. Even worse, you either have to make an educated guess and decide for yourself how long you think you can safely cache a query result (keep in mind that data tends to change, sometimes even rapidly), or you need to implement some form of eventing and leverage database triggers to notify all clients to invalidate specific cached entries (which is obviously not trivial and adds a lot of complexity).

Caching query results can greatly reduce the load on a database and give it some room to breathe. It naturally reduces the load a database has to handle because queries are not always issued but only when really necessary. Additionally, if implemented carefully, cached results can also be used to still serve requests even if the database is down. This usually won’t make all requests succeed, but a few are still more than none at all.
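The “educated guess” variant can be sketched as a small cache with a time-to-live that also serves stale entries while the database is unreachable. The class name and the choice of a single TTL for all queries are assumptions for this example, and the sketch never evicts entries, which a real cache would have to do.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class QueryCache:
    """Caches query results for a fixed TTL and serves stale data on errors."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable to make testing easy
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get(self, key: Hashable, run_query: Callable[[], Any]) -> Any:
        entry = self._entries.get(key)
        if entry is not None and self.clock() - entry[0] < self.ttl_seconds:
            return entry[1]          # fresh hit, the database is not touched
        try:
            result = run_query()
        except Exception:
            if entry is not None:
                return entry[1]      # database is down: serve the stale result
            raise                    # nothing cached, so the error surfaces
        self._entries[key] = (self.clock(), result)
        return result
```

The key would typically be derived from the query and its parameters, and `run_query` is whatever function actually talks to your database.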

Nevertheless, database caching isn’t simple, and there are alternatives you should try first (you will learn more about them in the next strategy further below). Still, don’t forget that you can and, if necessary, should consider caching database query results.

Distributed Caching

Most services you deploy aren’t isolated, lone components. Nowadays, we usually scale our software horizontally, either by leveraging serverless offerings or by deploying to Kubernetes. Horizontal scaling is just easier than thoroughly optimizing our code or scaling vertically by adding more and more hardware (although I have heard that these multi-million Oracle Database servers with Exabytes of RAM and hundreds of CPUs still exist).

Scaling horizontally comes at a cost, though. Instances don’t share their memory. An in-memory cache thus doesn’t work reliably. In this case, you can either accept that you have to do certain work n times (n being the number of instances of your service), or you can reduce the load on upstreams and databases even more by providing cached results to all instances of your service.

A common pattern is to deploy a Redis or a Memcached, which acts as a distributed cache. When one instance of a service makes an upstream request or sends a database query, it puts the result into one of these distributed caches and thus makes it available to all other instances. This can greatly reduce the number of requests all instances make overall, which in turn improves resiliency once again. And in case of any errors of upstream components, all service instances can reuse the cached data to respond to requests for as long as they are allowed to.

In-Memory Caching

In-memory caching is the easiest (but sometimes also hardest) form of caching. Cached responses can simply be stored in-memory and reused from there whenever necessary (or possible). Memory-based caches are also the fastest caches available because there is nearly no overhead involved. Accessing memory is just faster than anything that involves network calls (which also includes leveraging distributed caching mechanisms), and it’s still faster than accessing the file system (which you can theoretically share among pods in a Kubernetes cluster, but I’d really advise against it unless you really know what you are doing).

A common pattern is to put an in-memory cache in front of your distributed cache, which is put in front of any cacheable remote call. The call chain then usually works as follows:

Try to find a cached result within the in-memory cache

If found, return that result

If not found, try to fetch a cached result from the distributed cache

If found, return that result

If not found, try to fetch a fresh result from the upstream component

If found, return that result and put the result into all cache layers

If not found, use a fallback strategy or return an error
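The call chain above can be sketched as a single function. To keep the example self-contained, both cache layers are plain mappings (in reality, the distributed cache would be a Redis or Memcached client), and all names are my own. One small addition to the steps above: a hit in the distributed cache is also copied into the in-memory cache.

```python
from typing import Callable, MutableMapping, Optional


def tiered_lookup(
    key: str,
    local: MutableMapping[str, str],
    shared: MutableMapping[str, str],
    fetch: Callable[[str], Optional[str]],
    fallback: Callable[[str], str],
) -> str:
    # 1. In-memory cache: the cheapest lookup, no network involved.
    if key in local:
        return local[key]
    # 2. Distributed cache: one network hop, shared by all instances.
    if key in shared:
        local[key] = shared[key]  # promote the value to the faster layer
        return local[key]
    # 3. Upstream component: the most expensive call, and it may fail.
    try:
        value = fetch(key)
    except Exception:
        value = None
    if value is not None:
        shared[key] = value       # fill all cache layers for the next caller
        local[key] = value
        return value
    # 4. Nothing found anywhere: use the fallback strategy.
    return fallback(key)
```

The fallback could return static content or raise an error, depending on what makes sense for the caller.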

Next to improving the overall response times of your service, in-memory caching, once again, adds to the resiliency of your component. Even distributed caches can experience issues and outages. Having at least a few cached responses available in-memory allows you to serve a few requests instead of none at all.

Application-Level Caching

Application-level caches sit in front of all business logic inside your service. They can be leveraged before you even have to put in any compute resources to calculate or fetch a response for your caller. Right at the beginning of processing a request, you can make a lookup in all your different tiers of caches and see whether you already have a pre-calculated result. If so, you can return that. If not, you can still put in effort to compute a new result (which can then, in turn, be stored inside your application-level cache, so that you save computing power when the next request asking for the same result comes in).

As you can probably imagine, this reduces the load on all upstream components even further. You won’t usually end up with a 100% hit rate, but every percent counts. And once again, if some components are down, your application-level cache might still make someone’s day because they get a result instead of an error.

Deduplication

Deduplication is a strategy that tries to prevent the same work occurring concurrently. To better understand the problem deduplication solves, imagine the following scenario:

Three clients make a request to a service at the same time. All three requests hit the service at roughly the same time. They all request the same data. All of them are processed at the same time. What now usually happens is that the service still does the same work thrice (unless there are some locking mechanisms preventing it from doing so, but that’s usually not the case). If instead of three clients, you have to deal with a few thousand, the problem quickly becomes clear: Although you are probably already caching results and doing everything else, you still have to deal with a few thousand non-synchronized requests.

In-Memory Deduplication

Deduplication tries to solve the issue of dealing with non-synchronized requests. In its simplest form, deduplication creates an in-memory queue handling requests to your service. All requests that ask for the same data get queued up. The first request that hits your service in that chain creates a new task within that queue, while synchronization mechanisms prevent other requests from advancing further in the processing chain. After the task is created, the first request gets subscribed to the result of that task. Then all other requests that ask for the same data can advance further, but instead of starting a new task, they also subscribe to the task that the first request created. Then, all work to complete the task is done exactly once (including looking up a potentially cached result in all caching layers), eventually cached (if computed again), and its result then returned to all requests subscribed, which means that all clients get the same result, which only had to be computed exactly once.
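In code, this idea is often called “single flight”. The following sketch uses threads and a lock to show it. The class and method names are my own, and a real service would more likely build this on top of its framework’s concurrency primitives.

```python
import threading
from typing import Any, Callable, Dict, Hashable, Optional


class _Call:
    """One in-flight task that any number of requests can subscribe to."""

    def __init__(self) -> None:
        self.done = threading.Event()
        self.result: Any = None
        self.error: Optional[Exception] = None


class SingleFlight:
    """Ensures that concurrent requests for the same key share one task."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._calls: Dict[Hashable, _Call] = {}

    def do(self, key: Hashable, work: Callable[[], Any]) -> Any:
        with self._lock:
            call = self._calls.get(key)
            leader = call is None
            if leader:
                call = _Call()          # the first request creates the task
                self._calls[key] = call
        if leader:
            try:
                call.result = work()    # the work is done exactly once
            except Exception as exc:
                call.error = exc
            finally:
                with self._lock:
                    del self._calls[key]
                call.done.set()         # wake up all subscribers
        else:
            call.done.wait()            # subscribe to the leader's result
        if call.error is not None:
            raise call.error
        return call.result
```

If five requests for the same channel arrive while the first one is still being processed, `work` runs once and all five receive the same result. Requests that arrive after the task has finished start a new one, which is where your caches take over.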

Deduplicating requests indirectly increases your service’s (and thus your system’s) resiliency. It directly reduces the computing power you need to serve requests and it also saves upstream components from having to put in unnecessary duplicate work. The less work a system needs to do, the less likely it becomes for it to break at all.

Distributed Deduplication

In a more complex form, deduplication can even be performed in a distributed manner. Discord, for example, decouples requests to its ScyllaDB cluster, which stores all messages for all Discord channels, by leveraging proxies and so-called data services.

First, a proxy distributes requests for channel messages by a routing key and then routes all requests with the same routing key to a specific instance of a so-called data service. These data services implement an in-memory queue (as described above), which ensures that requests for the same data only get processed once at any given time. Within these data services, the corresponding queries are implemented to fetch certain types of data, which, in this case, only have to be sent to the database cluster once instead of multiple times.

This is only a small addition to the in-memory variant of deduplication (as described above), but the additional routing proxy ensures that the same work is really only done exactly once, and not by multiple instances of a service at the same time.
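The routing step can be illustrated with a simple hash-based mapping from a routing key to an instance. To be clear, this is not Discord’s implementation, just a sketch of the general idea with made-up names. Plain modulo hashing like this also reshuffles most keys whenever the number of instances changes, which is why real systems often use consistent hashing instead.

```python
import hashlib
from typing import Sequence


def pick_instance(routing_key: str, instances: Sequence[str]) -> str:
    """Map a routing key to one instance so that equal keys always land together."""
    digest = hashlib.sha256(routing_key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(instances)
    return instances[index]
```

Because every request for the same routing key ends up at the same instance, the in-memory deduplication on that instance sees all of them.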

Admittedly, distributed deduplication is probably something you only need to do at a very large scale. But it’s still nice to know that there is a strategy available, should you ever find yourself working at a scale that justifies even measures like this one. You can view it as the premier class of deduplication, with the most benefits for resiliency.

Failover

Even the most sophisticated methods of increasing resiliency we have already taken a look at can’t save you from all kinds of failure. Sometimes, components just break, no matter how much work you save them from. Infrastructure components or databases can just die out of the blue, and sometimes a whole data center loses power (like Cloudflare just experienced, which in turn brought all of npm down).

Failover is a strategy that employs a backup in case of an outage or emergency. A failing database, for example, can, in such a case, be replaced by a failover cluster that has been synced in the background all the time. If the main database fails, the failover automatically (hopefully) takes over.

There are multiple strategies for failover, but the most prominent one nowadays is probably Multi-AZ (short for Availability Zone) deployments.

Multi-AZ Deployments

All major cloud providers provide so-called availability zones (sometimes just called something different because … marketing) within their regions. These can be separate data centers (located geographically close to the main data center), or just other rooms within the same data center. No matter how a cloud provider implements them, they all usually have their own networks, power supplies, etc. This improves the chances that even if something fails in one availability zone, others can take over because they are all separated from each other.

On AWS, for example, you can deploy everything in multiple availability zones. Even the nodes of the same Kubernetes cluster (usually just EC2 instances) can be hosted in different availability zones. Pods can then be spread among the nodes of different availability zones evenly (or oddly, or however you like; it’s fully configurable) by making use of topology spread constraints and similar mechanisms. Most managed databases you can deploy on AWS also have an option for a Multi-AZ setup. In case of failure, they offer an automatic failover strategy (which you still have to explicitly activate, though).

Multi-AZ deployments throw money at a problem that doesn’t often occur. But if it occurs, you’ll be happy to realize that your investment has paid for itself. The issues covered by this strategy are usually something completely out of your control.

Canary Deployments

Even if you implement all of the above strategies, there is still a pretty high chance of you just introducing good old bugs. Mistakes just happen. But thankfully, you can protect yourself to some extent.

While there are also other deployment methods that have the same goal in mind (reducing the impact of deploying broken software), canary deployments bring the most automation to the table, so we will take a look at them as a representative example.

Conceptually, a canary deployment is a form of an automated rollover strategy with progressive traffic shifting. Instead of just rolling over a deployment (replacing the old software with the new one step by step), a canary deployment rolls over a deployment progressively and slowly and automatically shifts and divides traffic between a stable and a canary deployment.

How a Canary Deployment Works

A Canary deployment works as follows:

A new version of a software is deployed

The old, stable deployment still remains active

Automatically, a small percentage of traffic is shifted over to the new deployment

The shift can either be sticky (session-based, for example) or happen randomly. That’s usually configurable.

While traffic is shifted over to the new deployment, a controller closely monitors key metrics like error rates

If all observed metrics stay below a configured threshold, more and more traffic is gradually shifted over to the new deployment

If during this process, certain thresholds are broken, the traffic is automatically and completely shifted back to the old deployment

If no thresholds are broken, traffic is slowly shifted over until it hits 100%, which makes the canary deployment the new stable deployment
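The steps above boil down to a small control loop. The following sketch simulates it with an injected function that reports the observed error rate. The function names, the traffic steps, and the threshold are assumptions for illustration only. In a real cluster, a canary controller does this for you based on metrics from your monitoring system.

```python
from typing import Callable, List, Sequence, Tuple


def run_canary(
    observe_error_rate: Callable[[int], float],
    steps: Sequence[int] = (5, 25, 50, 100),
    max_error_rate: float = 0.01,
) -> Tuple[str, List[int]]:
    """Shift traffic step by step and roll back as soon as the threshold breaks.

    observe_error_rate receives the canary's traffic share in percent and
    returns the error rate measured while the canary served that share.
    """
    history: List[int] = []
    for weight in steps:
        history.append(weight)      # shift this share of traffic to the canary
        if observe_error_rate(weight) > max_error_rate:
            history.append(0)       # all traffic goes back to the stable version
            return "rolled_back", history
    return "promoted", history      # the canary becomes the new stable version
```

A canary that starts failing at 50% of traffic is rolled back at that step, so at no point did all users hit the broken version.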

How a Canary Deployment Improves Resiliency

Even if a new deployment has bugs, it is far less likely to bring the whole system down. Due to the (usually) automated nature of the process (given that everything is configured correctly), issues are spotted early before they affect too many users. The whole system rarely collapses due to one broken deployment.

Additionally, a canary deployment allows for rapid development. New features can quickly be tested in a production environment, which usually yields more useful data than synthetic tests. This indirectly improves the stability of the software because it’s tested against real traffic more often. Importantly, it also allows smaller chunks to be deployed more frequently. The smaller a release, the less likely major issues become. More features that have never seen the reality of production just increase the chance of bigger outages due to multiple bugs affecting each other.

You have (finally?) come to the end of this issue, so let me tell you something:

Thank you for reading this issue!

And now? Enjoy your peace of mind. Take a break. Go on a walk. And if you feel like it, work on a few projects.

Do whatever makes you happy. In the end, that’s everything that counts.

See you next week!

- Oliver